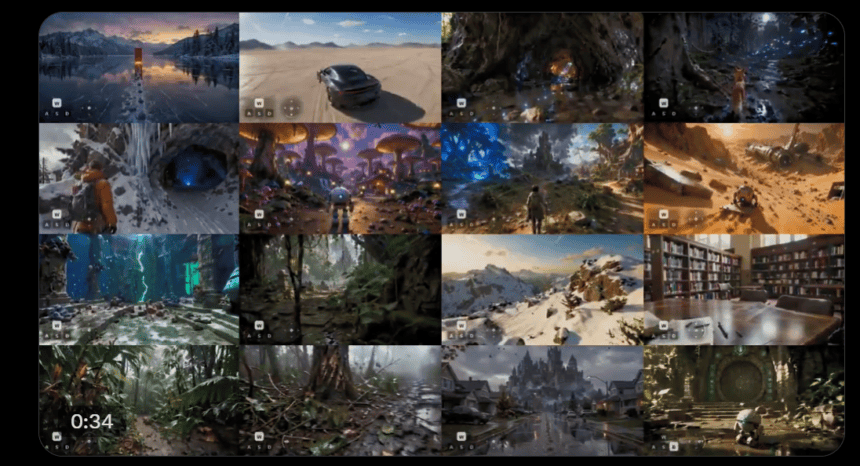

Give SANA-WM one image and a camera path. Thirty-four seconds later, you have a minute-long, 720p video where the camera moves exactly where you told it to go. No multi-GPU cluster. No cloud rental. One consumer graphics card.

That’s the pitch from Nvidia’s latest open-source release, and the benchmarks back it up.

What SANA-WM does

SANA-WM is a 2.6-billion-parameter world model: a system that takes a single image, a text prompt and a six-degrees-of-freedom (6-DoF) camera trajectory, then synthesizes a photorealistic video that follows that trajectory frame by frame. The output is 720p, runs up to 60 seconds, and maintains camera precision that beats models five times its size.

This isn’t image-to-video generation where you type a prompt and hope for the best. You’re defining the camera path through a 3D-consistent scene. The model tracks camera rotation and translation with metric-scale accuracy across the full minute.

The single-GPU trick

Most competing world models require eight GPUs just for inference. LingBot-World uses 14 billion parameters across two models on eight GPUs and produces 0.6 videos per hour. HY-WorldPlay needs eight GPUs for 1.1 videos per hour at 480p.

SANA-WM generates 24.1 videos per hour on a single GPU at 720p. With the full refinement pipeline running on eight H100s, throughput hits 22.0 videos per hour, a 36x advantage over LingBot-World at comparable visual quality scores.
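The throughput gap is easy to check by hand. The snippet below is a small sanity check that uses only the eight-GPU figures quoted above; the dictionary layout and function name are just for illustration.

```python
# Videos per hour as quoted in the article for eight-GPU inference setups.
systems = {
    "LingBot-World": {"videos_per_hour": 0.6, "gpus": 8},
    "HY-WorldPlay": {"videos_per_hour": 1.1, "gpus": 8},
    "SANA-WM + refiner": {"videos_per_hour": 22.0, "gpus": 8},
}

baseline = systems["LingBot-World"]["videos_per_hour"]


def speedup(name: str) -> float:
    """Throughput of `name` relative to LingBot-World."""
    return systems[name]["videos_per_hour"] / baseline


for name in systems:
    print(f"{name}: {speedup(name):.1f}x LingBot-World")
```

The ratio for SANA-WM with the refiner lands between 36 and 37, which is where the quoted 36x figure comes from.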

The distilled inference variant, running on a single RTX 5090 with NVFP4 quantization, denoises a complete 60-second 720p clip in 34 seconds. Total memory footprint for the full pipeline: 74.7 GB, which fits inside an H100’s 80 GB budget.

How it handles minute-long video without melting

Standard attention in video diffusion models scales quadratically with sequence length. A 60-second video at 720p means 961 frames. On a single GPU, standard softmax attention simply runs out of memory.
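To see why quadratic scaling bites, it helps to count the query-key pairs that full attention has to score. The token counts below are hypothetical and chosen only to show the trend; the article does not give SANA-WM's actual sequence length.

```python
def attention_score_entries(num_tokens: int) -> int:
    """Number of query-key pairs that full softmax attention scores."""
    return num_tokens * num_tokens


# Hypothetical token counts, chosen only to show the trend.
for n in (10_000, 20_000, 40_000):
    print(n, attention_score_entries(n))
```

Doubling the sequence length quadruples the work. Fused attention kernels can avoid storing the whole score matrix at once, but the amount of computation still grows with the square of the sequence length.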

SANA-WM solves this with a hybrid architecture. Fifteen of its 20 transformer blocks use frame-wise Gated DeltaNet (GDN), a recurrent mechanism that maintains a constant-size memory state regardless of how long the video gets. A decay gate forgets stale frames. A delta-rule correction updates only the difference between what the model predicts and what it needs, not the entire state.
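The decay gate and delta-rule correction can be sketched in a few lines. This is the generic, token-at-a-time gated delta rule as it is usually written in the DeltaNet literature, assuming unit-norm keys; it is not SANA-WM's frame-wise implementation, whose details the article does not give. The function names and toy values are made up for illustration.

```python
def gated_delta_step(state, k, v, alpha, beta):
    """One gated delta-rule update of a fixed-size memory.

    state: d_v x d_k matrix (list of rows); k: unit-norm key of length d_k;
    v: value of length d_v; alpha: decay gate in [0, 1];
    beta: write strength in [0, 1].
    """
    # Decay gate: shrink the old memory so stale content fades.
    decayed = [[alpha * s for s in row] for row in state]
    # What the decayed memory currently returns for this key.
    predicted = [sum(s * kj for s, kj in zip(row, k)) for row in decayed]
    # Delta rule: write only the gap between the target value and the prediction.
    return [
        [s + beta * (vi - pi) * kj for s, kj in zip(row, k)]
        for row, vi, pi in zip(decayed, v, predicted)
    ]


def read(state, q):
    """Query the memory: returns state @ q."""
    return [sum(s * qj for s, qj in zip(row, q)) for row in state]


d_k, d_v = 4, 2
state = [[0.0] * d_k for _ in range(d_v)]
key = [1.0, 0.0, 0.0, 0.0]

state = gated_delta_step(state, key, [3.0, -1.0], alpha=1.0, beta=1.0)
# Writing a new value under the same key corrects the old entry in place.
state = gated_delta_step(state, key, [5.0, 2.0], alpha=1.0, beta=1.0)
print(read(state, key))
```

The state stays a d_v x d_k matrix no matter how many steps are applied, which is the property that keeps memory flat as the video gets longer.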

The remaining five blocks use traditional softmax attention, placed at regular intervals to anchor long-range spatial consistency where the recurrent approach alone falls short.
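One layout that matches the 15-to-5 split is a softmax block closing every group of four. The exact positions are an assumption here; the article only says the softmax blocks sit at regular intervals.

```python
NUM_BLOCKS = 20
SOFTMAX_EVERY = 4  # assumption: one softmax block closes each group of four

layout = [
    "softmax" if (i + 1) % SOFTMAX_EVERY == 0 else "gdn"
    for i in range(NUM_BLOCKS)
]
print(layout.count("gdn"), layout.count("softmax"))
```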

To prevent the gradient instability that killed earlier attempts, the team developed a key-scaling formula (1/√(D·S)) that keeps the transition matrix bounded. Without it, training crashes with NaN errors within the first 16 steps.
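The article does not define D and S. One plausible reading, and it is only an assumption here, is that D is the per-head key dimension and S is the number of tokens in a frame. Under that reading, scaling keys with roughly unit-variance entries by 1/√(D·S) keeps the squared Frobenius norm of a frame's key matrix K near 1. That norm equals trace(KᵀK), which upper-bounds the largest eigenvalue of KᵀK, so a transition of the form I − β·KᵀK with β in [0, 1] stays bounded. The sketch below checks the norm numerically with made-up sizes and random keys.

```python
import math
import random

random.seed(0)
D = 64   # assumed: key dimension per head
S = 256  # assumed: tokens per frame

scale = 1.0 / math.sqrt(D * S)
# Hypothetical keys with unit-variance entries, scaled before the state update.
keys = [[random.gauss(0.0, 1.0) * scale for _ in range(D)] for _ in range(S)]

# Squared Frobenius norm = trace(K^T K) >= largest eigenvalue of K^T K.
frob_sq = sum(x * x for row in keys for x in row)
print(f"trace(K^T K) = {frob_sq:.3f}")
```

Without the scaling, the same trace would be on the order of D·S, and repeated application of the transition could blow up, which is consistent with the NaN failures described above.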

Camera control that tracks two timescales

Controlling a camera through a minute-long scene requires precision at two different temporal rates. SANA-WM uses a dual-branch system to handle both.

The coarse branch operates at the latent-frame rate, applying Unified Camera Positional Encoding (UCPE) to capture the global trajectory structure across the full sequence.

The fine branch addresses a compression problem: each latent token represents eight raw video frames, each with its own camera pose. The fine branch computes pixel-wise Plücker ray maps from all eight frames, packs them into a 48-channel tensor, and injects this data after each self-attention output. This recovers the intra-stride camera motion that the coarse branch can’t see.
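The 48 channels follow from eight frames times six Plücker coordinates per ray. Here is a minimal sketch for a single pixel, assuming a pinhole camera, camera-to-world rotations, and the (direction, moment) ordering; the intrinsics and poses are invented, and SANA-WM's actual conventions may differ.

```python
import math


def cross(a, b):
    """3-D cross product."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def plucker_ray(u, v, fx, fy, cx, cy, rotation, origin):
    """6-D Plücker coordinates (direction, moment) of the ray through pixel (u, v).

    rotation: 3x3 camera-to-world matrix given as rows;
    origin: camera center in world space.
    """
    d_cam = ((u - cx) / fx, (v - cy) / fy, 1.0)
    d_world = [sum(r * c for r, c in zip(row, d_cam)) for row in rotation]
    norm = math.sqrt(sum(c * c for c in d_world))
    d = tuple(c / norm for c in d_world)
    m = cross(origin, d)  # moment = origin x direction
    return d + m


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
# Hypothetical poses: eight raw frames sliding 5 cm per frame along x.
poses = [(identity, (0.05 * i, 0.0, 0.0)) for i in range(8)]

# All eight 6-D rays for one pixel, concatenated into that pixel's channel vector.
channels = []
for rotation, origin in poses:
    channels.extend(
        plucker_ray(320, 180, 500.0, 500.0, 640.0, 360.0, rotation, origin)
    )
print(len(channels))  # 8 frames x 6 coordinates
```

Because each of the eight rays carries its own origin, the packed vector changes even when the camera moves only slightly within one latent stride, which is the signal the coarse branch lacks.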

In ablation tests, UCPE alone achieved a Camera Motion Consistency (CamMC) score of 0.2453 (lower is better). Adding Plücker mixing dropped it to 0.2047, the best among all compared methods.

A second-stage refiner cleans up drift

Raw output from the first stage is spatiotemporally consistent but can develop structural artifacts over long sequences. A second-stage refiner, built on the 17-billion-parameter LTX-2 model with rank-384 LoRA adapters, corrects these issues in just three denoising steps.
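For a sense of what rank 384 means, a LoRA adapter on a weight matrix of shape d_out x d_in adds two small matrices with rank x (d_in + d_out) parameters in total. The layer width below is hypothetical and not taken from LTX-2.

```python
def lora_extra_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters added by a LoRA pair A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


d = 4096  # hypothetical layer width, not taken from LTX-2
added = lora_extra_params(d, d, rank=384)
ratio = added / (d * d)
print(added, ratio)
```

At this made-up width the adapter is a bit under a fifth of the size of the frozen weight it modifies, which is large by LoRA standards but still far cheaper than full fine-tuning.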

The impact is measurable. On hard camera trajectories, visual quality degradation from the first 10 seconds to the last 10 seconds (ΔIQ) dropped from 3.09 to 0.31 after refinement. On simple trajectories, it went from 3.79 to 1.17.
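As described, ΔIQ compares quality at the start of a clip with quality at the end. A minimal sketch, assuming one quality score per second and a plain average over each 10-second window (the benchmark's exact averaging is not specified in the article, and the scores below are made up):

```python
def delta_iq(per_second_scores, window=10):
    """Mean quality of the first `window` seconds minus the last `window` seconds."""
    first = per_second_scores[:window]
    last = per_second_scores[-window:]
    return sum(first) / len(first) - sum(last) / len(last)


# Made-up scores for a 60-second clip that loses quality linearly over time.
scores = [80.0 - 0.05 * t for t in range(60)]
print(round(delta_iq(scores), 2))
```

A value near zero means the last 10 seconds look about as good as the first 10, which is the behavior the refiner is meant to restore.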

Training took 18.5 days on 64 H100s

The entire training pipeline used 212,975 video clips drawn from seven public and synthetic sources, with metric-scale 6-DoF pose annotations generated by a modified version of the VIPE camera-pose annotation engine.

Training proceeded in four progressive stages over approximately 15 days, preceded by a 3.5-day VAE adaptation step. The process started with short five-second clips to establish the GDN architecture, then scaled to full 60-second sequences with camera control, and finished with distillation to reduce inference to four denoising steps.
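The headline figure is just the two phases added together. The GPU-hour total below additionally assumes that all 64 GPUs were in use for the whole run, which the article implies but does not state outright.

```python
vae_adaptation_days = 3.5
main_training_days = 15.0  # the article says "approximately"
num_gpus = 64              # assumed constant across every stage

total_days = vae_adaptation_days + main_training_days
gpu_hours = total_days * 24 * num_gpus
print(total_days, gpu_hours)
```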

Custom fused Triton kernels for GDN scan and gate operations contributed 1.5x to 2x throughput gains throughout training.

Benchmark results against the field

On Nvidia’s purpose-built 60-second benchmark (80 scenes across four categories, with simple and hard trajectory splits), SANA-WM with the refiner achieved rotation errors of 4.50° and 8.34° on the simple and hard splits respectively, versus 10.47° and 18.99° for LingBot-World. VBench visual quality scores came in at 80.62 and 81.89, on par with LingBot-World’s 81.82 and 81.89, while SANA-WM outputs 720p instead of 480p and runs on a single GPU instead of eight.

The authors acknowledge limitations. There’s no explicit 3D scene memory, which means the model can drift in dynamic scenes or unusual viewpoints. The authors suggest using the fast stage-one model to search through trajectory options, then selectively running the refiner on promising results.
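That search-then-refine workflow could look something like the sketch below. Every callable here is a hypothetical stand-in rather than part of the SANA-WM release, and the scoring heuristic is left entirely to the caller.

```python
from typing import Callable, Sequence, TypeVar

Trajectory = TypeVar("Trajectory")
Video = TypeVar("Video")


def search_then_refine(
    trajectories: Sequence[Trajectory],
    generate_draft: Callable[[Trajectory], Video],  # stand-in for the fast stage-one model
    score: Callable[[Video], float],                # stand-in for any quality heuristic
    refine: Callable[[Video], Video],               # stand-in for the second-stage refiner
    keep: int = 1,
) -> list:
    """Draft every trajectory cheaply, then refine only the top `keep` drafts."""
    drafts = [generate_draft(t) for t in trajectories]
    best = sorted(drafts, key=score, reverse=True)[:keep]
    return [refine(d) for d in best]


# Toy stand-ins so the sketch runs; a real setup would call the models instead.
results = search_then_refine(
    trajectories=["orbit", "dolly-in", "pan"],
    generate_draft=lambda t: f"draft({t})",
    score=len,
    refine=lambda v: f"refined({v})",
    keep=1,
)
print(results)
```

The point of the pattern is that the expensive refiner runs `keep` times rather than once per candidate trajectory.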

Open source under Apache 2.0

SANA-WM is available through the NVlabs/Sana GitHub repository with Apache 2.0 licensing for the code. Individual dataset and model weight licenses vary. The repo also hosts SANA, SANA-1.5, SANA-Sprint, and SANA-Video.

Three inference variants ship with the release: a bidirectional generator for highest-quality offline synthesis, a chunk-causal autoregressive generator for sequential streaming, and the distilled autoregressive variant that hits the 34-second mark on a single RTX 5090.